Optimized llama.cpp GGUF quants of Qwen3.5-27B, made for 16 GB VRAM cards.

Made using Thireus's GGUF-Tool-Suite, tuned by me (Gammaception) :)

Best 16 GB config for GC IQ3_M: headless, 64k ctx, KV cache at q8_0 for both K and V, `-ub 256`, q8_0 mmproj. I use the officially recommended sampling parameters, along with a reasoning budget + message.
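
A minimal sketch of that config as a `llama-server` launch, written in Python so the flags are easy to inspect. The file names are hypothetical placeholders, `-ngl 99` (full GPU offload) is my assumption, and the sampling and reasoning-budget settings are left out because their exact values aren't listed here. Depending on your llama.cpp build, a quantized V cache may also require flash attention to be enabled.

```python
import shlex

# Hypothetical file names: substitute the GGUF and mmproj files you actually downloaded.
MODEL_PATH = "Qwen3.5-27B-GC-IQ3_M.gguf"
MMPROJ_PATH = "mmproj-q8_0.gguf"


def build_server_args(model_path: str, mmproj_path: str) -> list[str]:
    """Return a llama-server argument list mirroring the 16 GB config above."""
    return [
        "llama-server",
        "-m", model_path,
        "--mmproj", mmproj_path,  # q8_0 multimodal projector
        "-c", "65536",            # 64k context
        "-ctk", "q8_0",           # K cache quantized to q8_0
        "-ctv", "q8_0",           # V cache quantized to q8_0
        "-ub", "256",             # physical (micro) batch size
        "-ngl", "99",             # assumption: offload all layers to the GPU
    ]


if __name__ == "__main__":
    args = build_server_args(MODEL_PATH, MMPROJ_PATH)
    # Print a copy-pasteable shell command instead of launching anything.
    print(shlex.join(args))
```

Building the list rather than a single string keeps each flag/value pair explicit; pass it to `subprocess.run(args)` if you want the script to start the server itself.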

EDIT 24/03/26: Updated GGUF to fix coding quality, new charts

EDIT 03/04/26: New variants with better quality-to-size ratios, updated charts

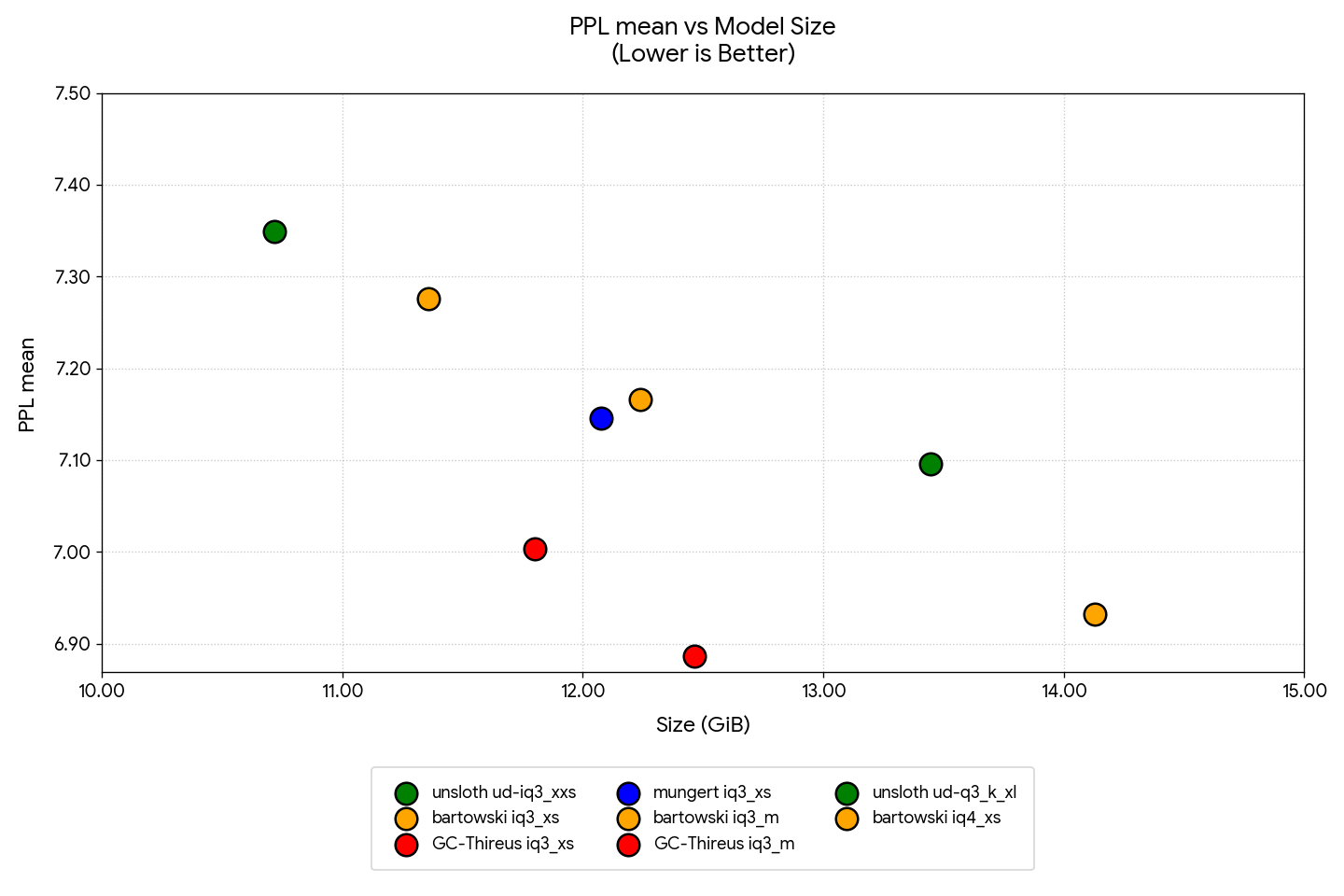

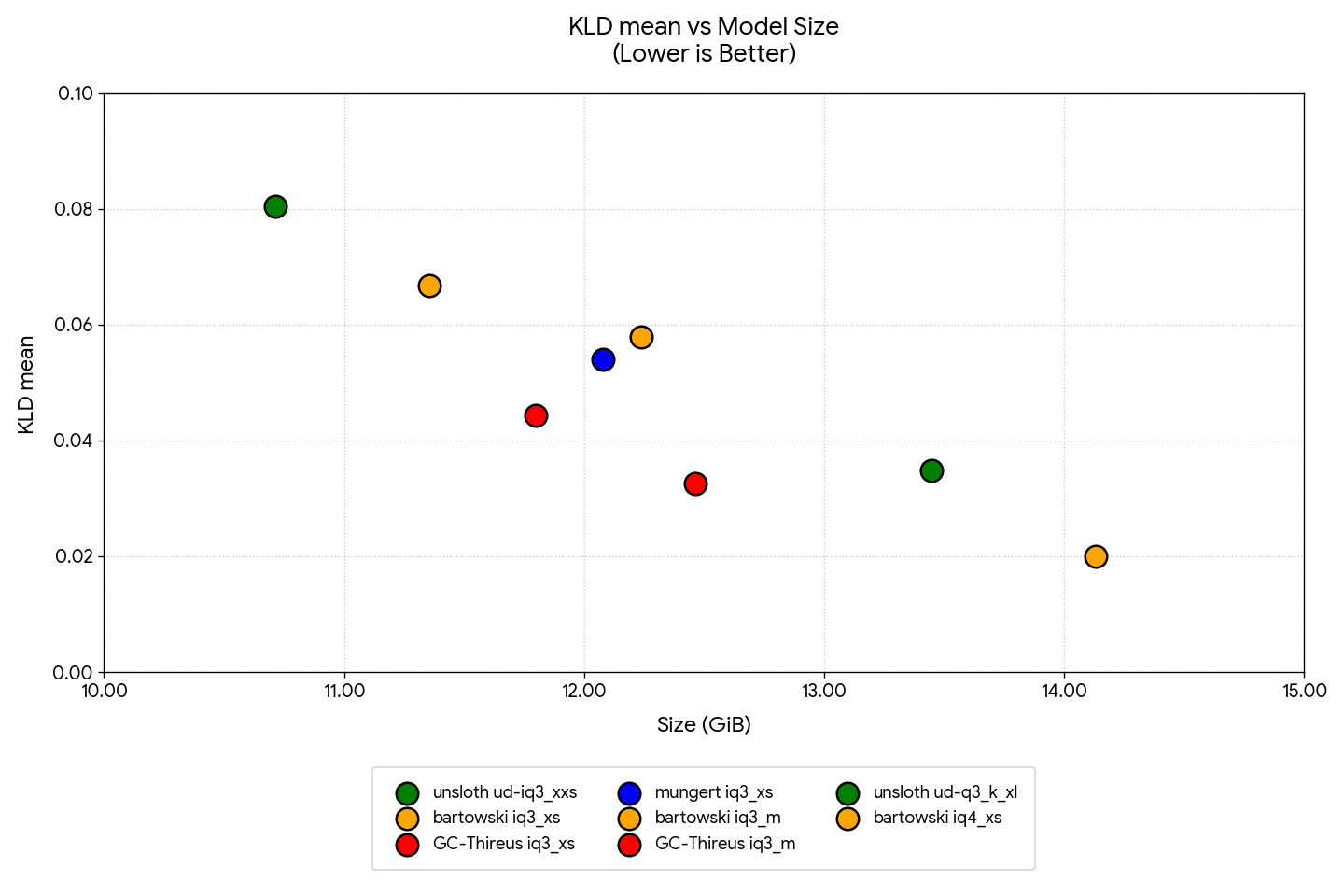

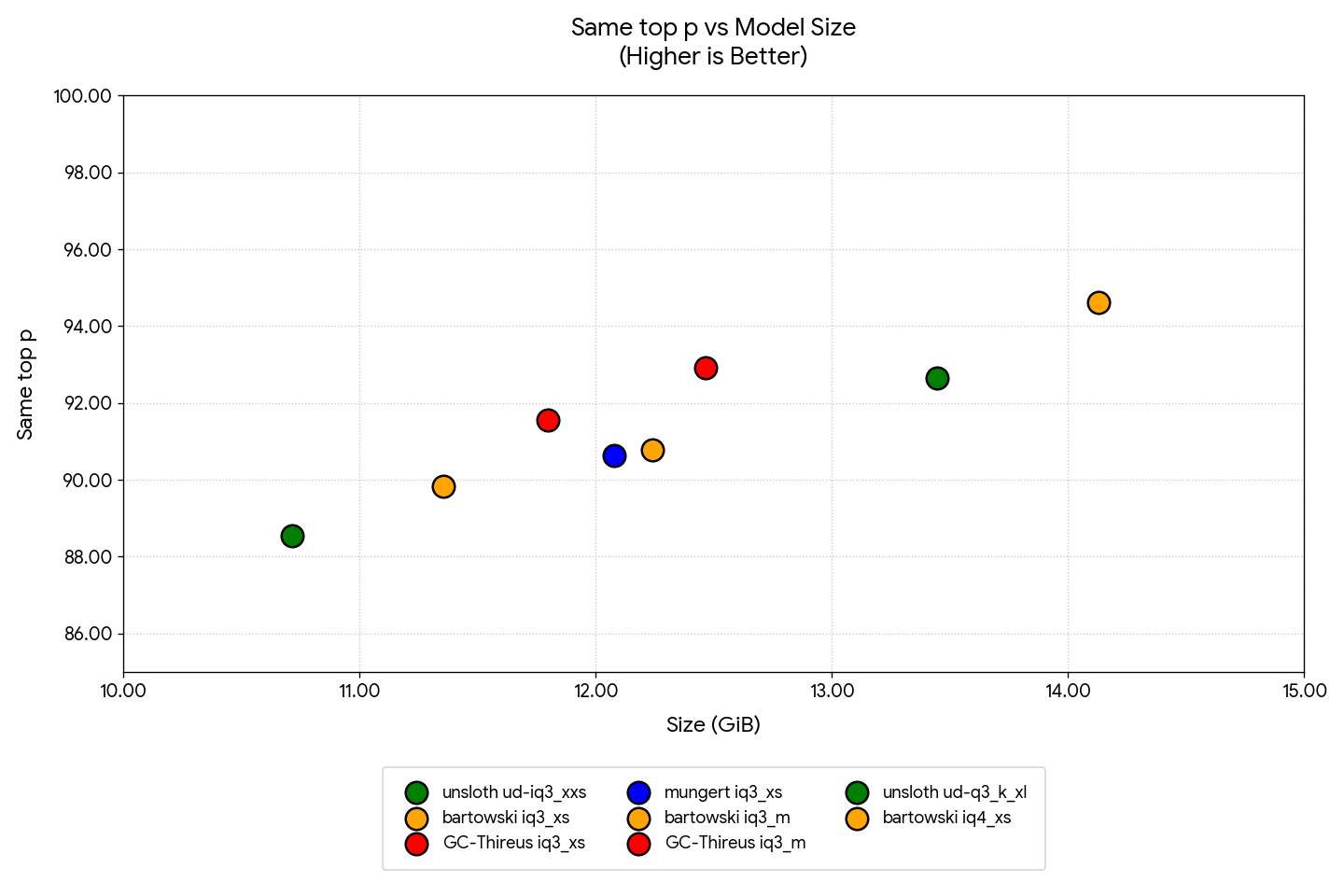

| Quant | unsloth ud-iq3_xxs | bartowski iq3_xs | GC-Thireus iq3_xs | mungert iq3_xs | bartowski iq3_m | GC-Thireus iq3_m | unsloth ud-q3_k_xl | bartowski iq4_xs |
|---|---|---|---|---|---|---|---|---|
| Size (GiB) | 10.716 | 11.357 | 11.800 | 12.078 | 12.240 | 12.465 | 13.447 | 14.130 |
| PPL mean | 7.349445 | 7.275691 | 7.003592 | 7.146062 | 7.166074 | 6.886104 | 7.095870 | 6.932149 |
| KLD mean | 0.080486 | 0.066744 | 0.044320 | 0.053966 | 0.057891 | 0.032533 | 0.034783 | 0.019950 |
| Same top p (%) | 88.546 | 89.837 | 91.560 | 90.625 | 90.782 | 92.926 | 92.656 | 94.610 |

KLD reference logits are from unsloth UD-Q6_K_XL (the best I can fit), measured on wikitext-2 at 512 ctx with a bf16 KV cache.
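
To make the quality-vs-size comparison concrete, here is a small sketch (my own addition, not part of GGUF-Tool-Suite) that takes the size and mean KLD from the table and keeps only quants whose KLD is lower than every smaller quant's, i.e. the size/KLD Pareto frontier. Mean KLD is only one metric; ranking by PPL or same top p could give a different frontier.

```python
# Size (GiB) and mean KLD copied from the table above.
QUANTS = {
    "unsloth ud-iq3_xxs": (10.716, 0.080486),
    "bartowski iq3_xs": (11.357, 0.066744),
    "GC-Thireus iq3_xs": (11.800, 0.044320),
    "mungert iq3_xs": (12.078, 0.053966),
    "bartowski iq3_m": (12.240, 0.057891),
    "GC-Thireus iq3_m": (12.465, 0.032533),
    "unsloth ud-q3_k_xl": (13.447, 0.034783),
    "bartowski iq4_xs": (14.130, 0.019950),
}


def pareto_frontier(quants: dict[str, tuple[float, float]]) -> list[str]:
    """Return quants with lower KLD than every smaller quant, smallest first."""
    frontier = []
    best_kld = float("inf")
    # Walk from smallest to largest file size.
    for name, (_size, kld) in sorted(quants.items(), key=lambda item: item[1][0]):
        # Extra size only pays off if it beats the best KLD seen so far.
        if kld < best_kld:
            frontier.append(name)
            best_kld = kld
    return frontier


if __name__ == "__main__":
    for name in pareto_frontier(QUANTS):
        size, kld = QUANTS[name]
        print(f"{name:<20} {size:7.3f} GiB  KLD {kld:.6f}")
```

With these numbers, mungert iq3_xs, bartowski iq3_m and unsloth ud-q3_k_xl drop out: each is larger than a GC-Thireus quant that has a lower mean KLD.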

Model tree for Gammaception/Qwen3.5-27B-Thireus-16gb-optimized-GGUF: base model Qwen/Qwen3.5-27B