Naming notice (2026-04-10). The "PolarQuant" technique used in this model is being rebranded to HLWQ (Hadamard-Lloyd Weight Quantization). Only the name changes; the algorithm and the weights in this repository are unchanged.

The rebrand resolves a name collision with an unrelated, earlier KV cache quantization method also named PolarQuant (Han et al., arXiv:2502.02617, 2025). HLWQ quantizes weights using a deterministic Walsh-Hadamard rotation and a Lloyd-Max scalar codebook; Han et al.'s PolarQuant quantizes the KV cache using a random polar rotation. The two methods are technically distinct.

Existing loaders that load this repository by ID continue to work without changes. Future model uploads will use the HLWQ name.

Reference paper for this technique: arXiv:2603.29078 (v2 in preparation; v1 still uses the old name).

🍎 HLWQ MLX 4-bit — Qwopus3.5-9B-v3

HLWQ Q5 dequant → MLX 4-bit for Apple Silicon inference.

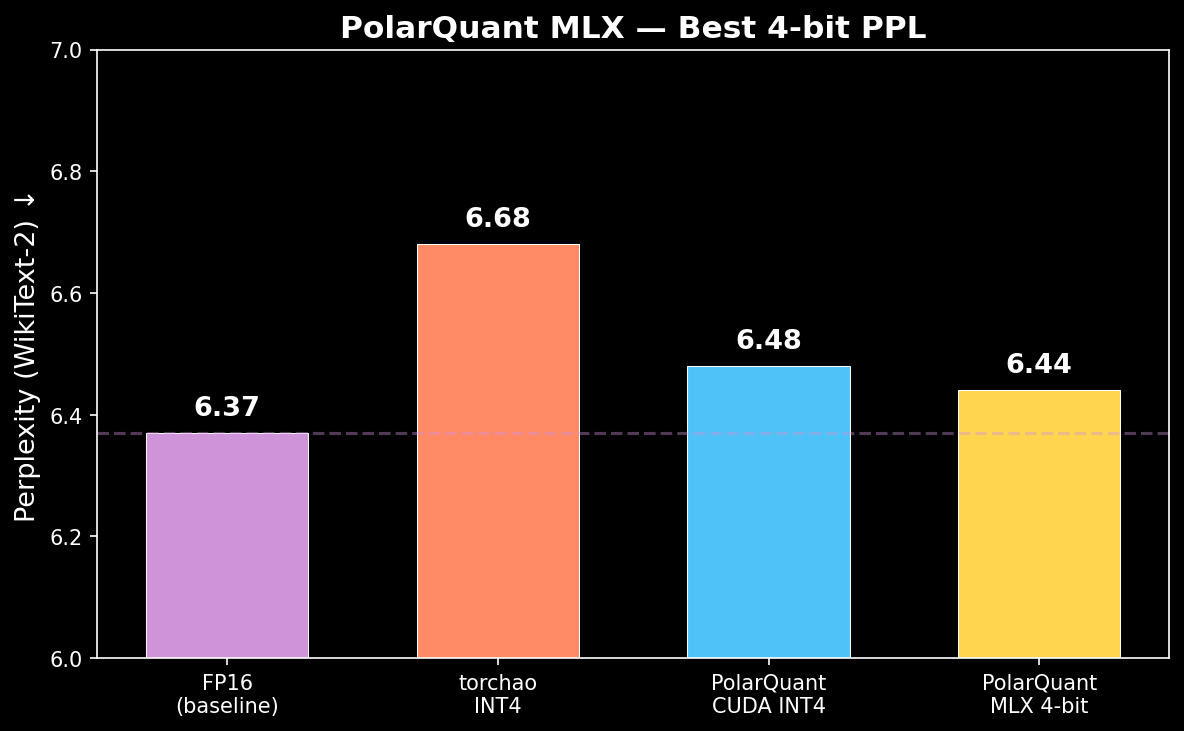

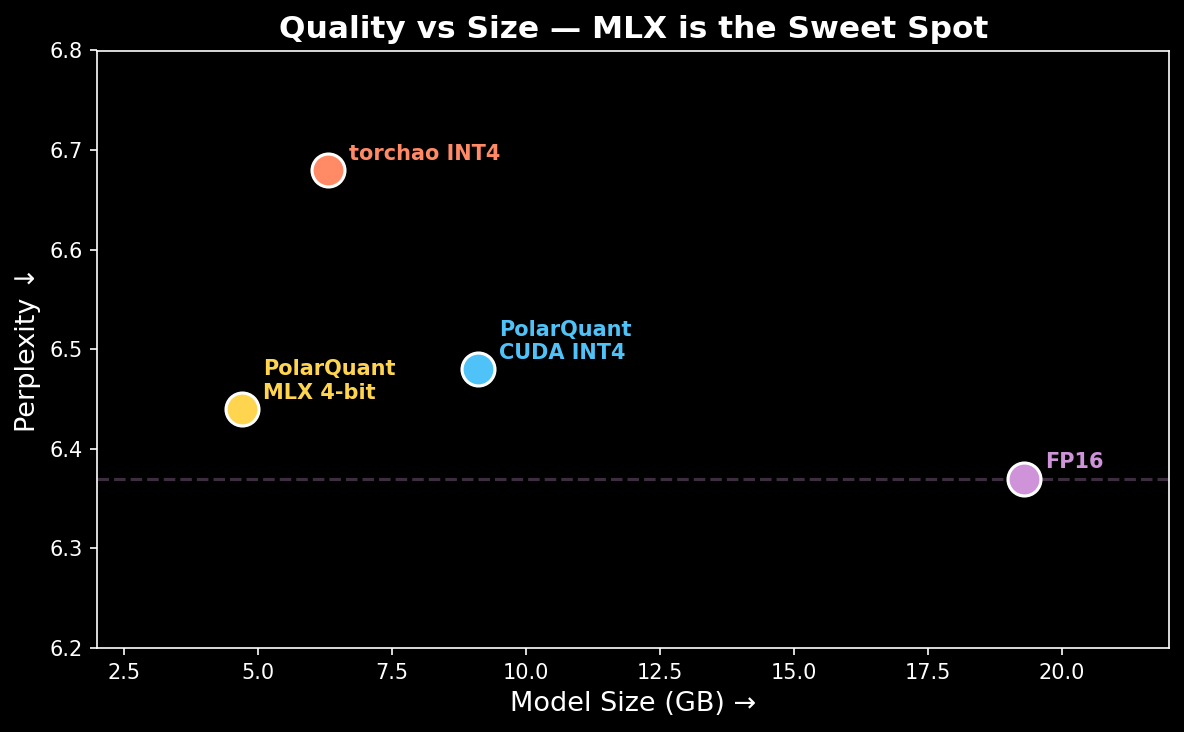

PPL 6.44: better than HLWQ Q5 + torchao INT4 on CUDA (6.48) and only +0.07 above the FP16 baseline (6.37).

🎯 Key Results

| Metric | Value |

|---|---|

| Perplexity | 6.44 (FP16: 6.37, CUDA INT4: 6.48, torchao absmax: 6.68) |

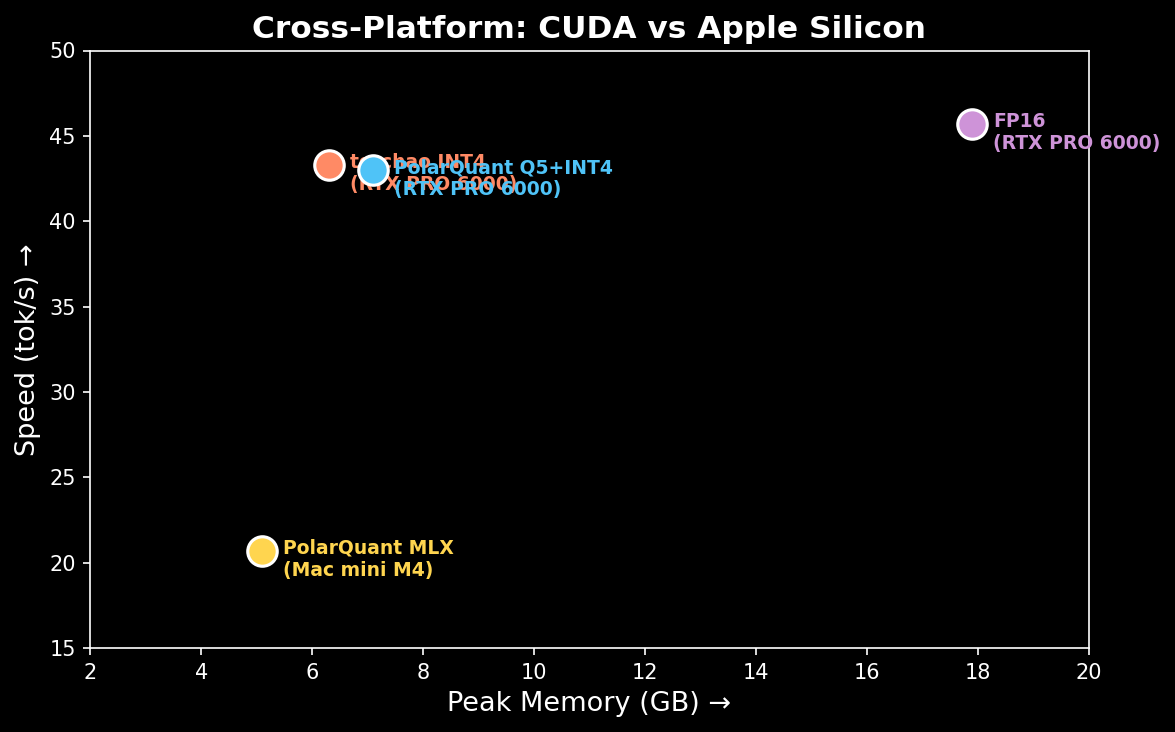

| Speed | 20.7 tok/s (Mac mini M4 16GB) |

| Memory | 5.1 GB peak |

| Format | MLX 4-bit (4.5 bpw, group_size=64) |

| Size | 4.7 GB |
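The 4.5 bpw figure in the Format row follows from the group size. As a rough sketch, assuming each group of 64 weights stores one 16-bit scale and one 16-bit bias alongside the 4-bit codes (the helper name `bits_per_weight` and its defaults are illustrative, not part of mlx-lm):

```python
def bits_per_weight(q_bits: int, group_size: int,
                    scale_bits: int = 16, bias_bits: int = 16) -> float:
    """Effective bits per weight for group-wise affine quantization.

    Assumption: every group carries one scale and one bias of the given widths.
    """
    return q_bits + (scale_bits + bias_bits) / group_size


print(bits_per_weight(4, 64))   # 4.5
print(bits_per_weight(4, 128))  # 4.25
```

Under the same assumption, doubling the group size to 128 saves only 0.25 bits per weight, which is the trade-off against finer-grained scales.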

📊 Benchmark Comparison

| Platform | Method | PPL ↓ | tok/s | Memory |
|---|---|---|---|---|
| RTX PRO 6000 Blackwell | FP16 baseline | 6.37 | 45.7 | 17.9 GB |
| Mac mini M4 16GB | HLWQ MLX 4-bit | 6.44 | 20.7 | 5.1 GB |
| RTX PRO 6000 Blackwell | HLWQ Q5 + torchao INT4 | 6.48 | 43 | 7.1 GB |
| RTX PRO 6000 Blackwell | torchao INT4 (absmax) | 6.68 | 43.3 | 6.3 GB |

MLX 4-bit beats HLWQ Q5 + torchao INT4 on PPL (6.44 vs 6.48) while using about 28% less memory (5.1 vs 7.1 GB).

🚀 Quick Start

```bash
pip install mlx-lm
```

```python
from mlx_lm import load, generate

model, tokenizer = load("caiovicentino1/Qwopus3.5-9B-v3-HLWQ-MLX-4bit")

response = generate(
    model, tokenizer,
    prompt="What is the sum of the first 10 prime numbers? Think step by step.",
    max_tokens=500
)

print(response)
```

Or from CLI:

```bash
mlx_lm.generate \
  --model caiovicentino1/Qwopus3.5-9B-v3-HLWQ-MLX-4bit \
  --prompt "Explain quantum computing" \
  --max-tokens 300
```

🔧 How It Was Made

```
Base model (BF16) → HLWQ Q5 dequant (Hadamard + Lloyd-Max)
                  → Save improved BF16 weights
                  → mlx_lm.convert --quantize --q-bits 4 --q-group-size 64
```

The HLWQ Q5 round trip (quantize, then dequantize) yields BF16 weights that re-quantize with lower error than the original BF16 weights. MLX's 4-bit quantizer therefore starts from a better baseline, which gives better final quality.
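The two ingredients named above, a Walsh-Hadamard rotation and a Lloyd-Max scalar codebook, can be sketched in NumPy. This is a minimal illustration under stated assumptions, not the repository's implementation: the block size of 128, the per-block standard-deviation scaling, the quantile initialization, and all function names are hypothetical choices made for the example.

```python
import numpy as np


def hadamard(n: int) -> np.ndarray:
    """Orthonormal Walsh-Hadamard matrix (Sylvester construction); n must be a power of two."""
    assert n > 0 and n & (n - 1) == 0, "n must be a power of two"
    H = np.array([[1.0]])
    while H.shape[0] < n:
        H = np.block([[H, H], [H, -H]])
    return H / np.sqrt(n)


def lloyd_max(x: np.ndarray, levels: int, iters: int = 30) -> np.ndarray:
    """Fit a 1-D Lloyd-Max codebook by alternating nearest-level assignment and centroid updates."""
    codebook = np.quantile(x, (np.arange(levels) + 0.5) / levels)  # assumed initialization
    for _ in range(iters):
        edges = (codebook[:-1] + codebook[1:]) / 2  # decision boundaries between levels
        idx = np.searchsorted(edges, x)
        for k in range(levels):
            members = x[idx == k]
            if members.size:
                codebook[k] = members.mean()
    return codebook


def quantize_dequantize(W: np.ndarray, bits: int = 5, block: int = 128) -> np.ndarray:
    """Rotate each block, quantize with a shared Lloyd-Max codebook, then rotate back."""
    H = hadamard(block)
    rotated = W.reshape(-1, block) @ H
    scale = rotated.std(axis=1, keepdims=True) + 1e-12  # hypothetical per-block scale
    normalized = rotated / scale
    codebook = lloyd_max(normalized.ravel(), 2 ** bits)
    edges = (codebook[:-1] + codebook[1:]) / 2
    dequantized = codebook[np.searchsorted(edges, normalized)] * scale
    return (dequantized @ H.T).reshape(W.shape)  # H is orthonormal, so H.T inverts it


rng = np.random.default_rng(0)
W = rng.standard_normal((64, 256))
W_hat = quantize_dequantize(W)
rel_err = np.linalg.norm(W - W_hat) / np.linalg.norm(W)
print(f"relative reconstruction error: {rel_err:.4f}")
```

The rotation spreads outliers across each block so that a single scalar codebook fits the values well; the output `W_hat` plays the role of the "improved BF16 weights" that are then passed to the MLX converter.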

🔬 Why MLX Beats CUDA on PPL

MLX 4-bit with group_size=64 has finer granularity than torchao INT4 with group_size=128. Combined with HLWQ's improved starting weights, this gives the best PPL of any 4-bit method tested.
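The effect of group size can be checked on synthetic data. The sketch below uses a generic min/max affine quantizer as a stand-in; it is an assumption for illustration and does not reproduce the exact MLX or torchao kernels (torchao's absmax variant, for instance, is symmetric). The function name `affine_quant_dequant` is hypothetical.

```python
import numpy as np


def affine_quant_dequant(W: np.ndarray, bits: int = 4, group_size: int = 64) -> np.ndarray:
    """Group-wise min/max affine quantization followed by dequantization."""
    groups = W.reshape(-1, group_size)
    lo = groups.min(axis=1, keepdims=True)
    hi = groups.max(axis=1, keepdims=True)
    levels = 2 ** bits - 1
    scale = (hi - lo) / levels
    scale = np.where(scale == 0, 1.0, scale)  # guard against constant groups
    q = np.clip(np.round((groups - lo) / scale), 0, levels)
    return (q * scale + lo).reshape(W.shape)


rng = np.random.default_rng(0)
W = rng.standard_normal((256, 1024)).astype(np.float32)

mse_64 = float(np.mean((W - affine_quant_dequant(W, group_size=64)) ** 2))
mse_128 = float(np.mean((W - affine_quant_dequant(W, group_size=128)) ** 2))
print(f"MSE group_size=64:  {mse_64:.6f}")
print(f"MSE group_size=128: {mse_128:.6f}")
```

Smaller groups have a narrower min/max range on average, so the 16 available levels are spaced more tightly and the rounding error drops, at the cost of storing more scales and biases.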

🔗 Resources

📖 Citation

```bibtex
@misc{polarquant2025,
  title={HLWQ: Hadamard Rotation + Lloyd-Max Optimal Quantization for LLMs},
  author={Caio Vicentino},
  year={2025},
  url={https://github.com/caiovicentino/eoq-quantization}
}
```

🙏 Acknowledgements

- Base model: Jackrong/Qwopus3.5-9B-v3

- MLX framework: Apple MLX

- Mathematical foundation: Walsh-Hadamard Transform + Lloyd-Max algorithm (1982)

